I'm an MSAI candidate at Kennesaw State University and the founder of Metamorphic Curations, an AI transformation consultancy. My research sits at the intersection of LLM behavior, AI fairness, and governance — the parts of the field where the metric doesn't match reality, and where the fix has to be structural.

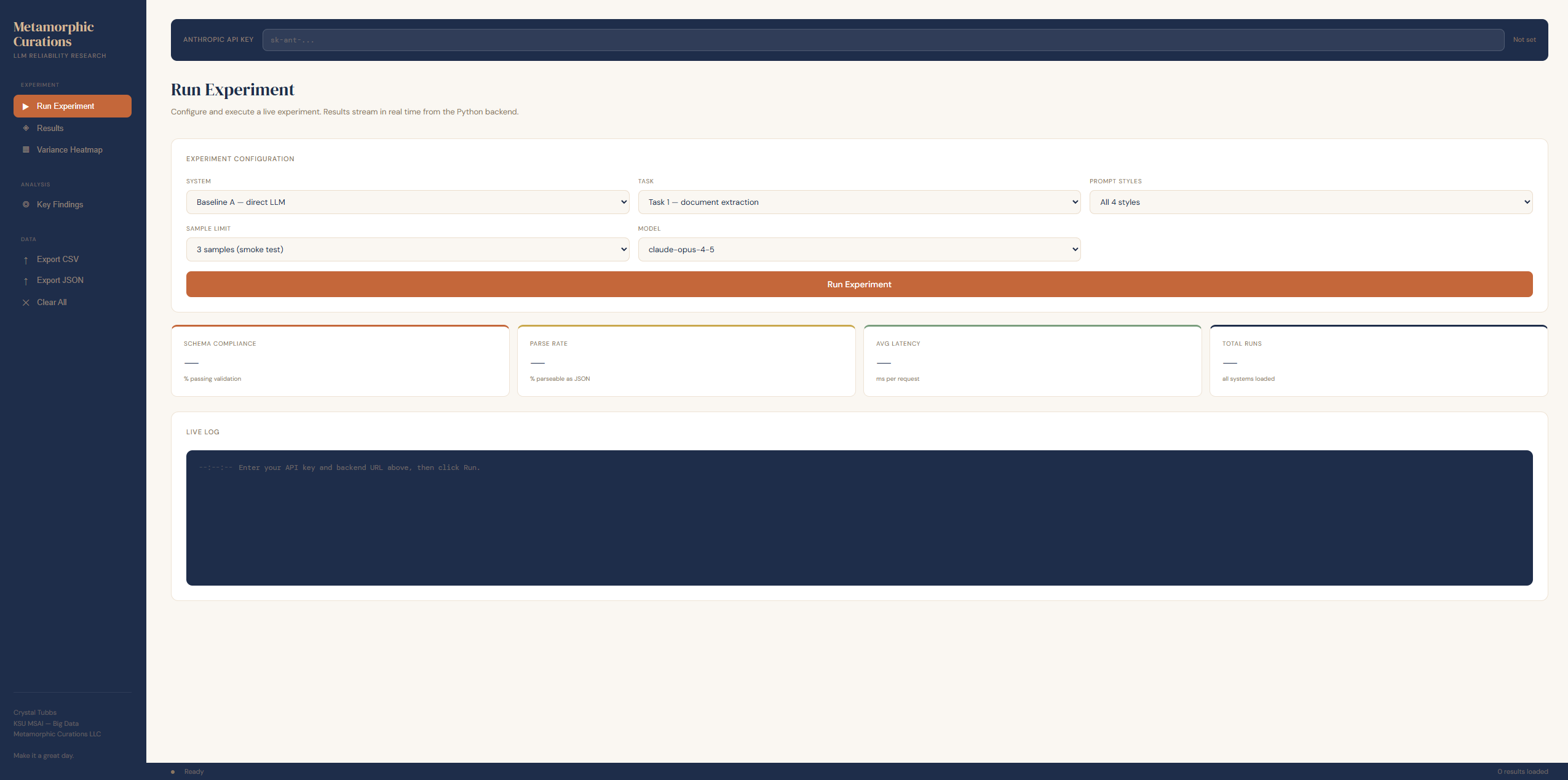

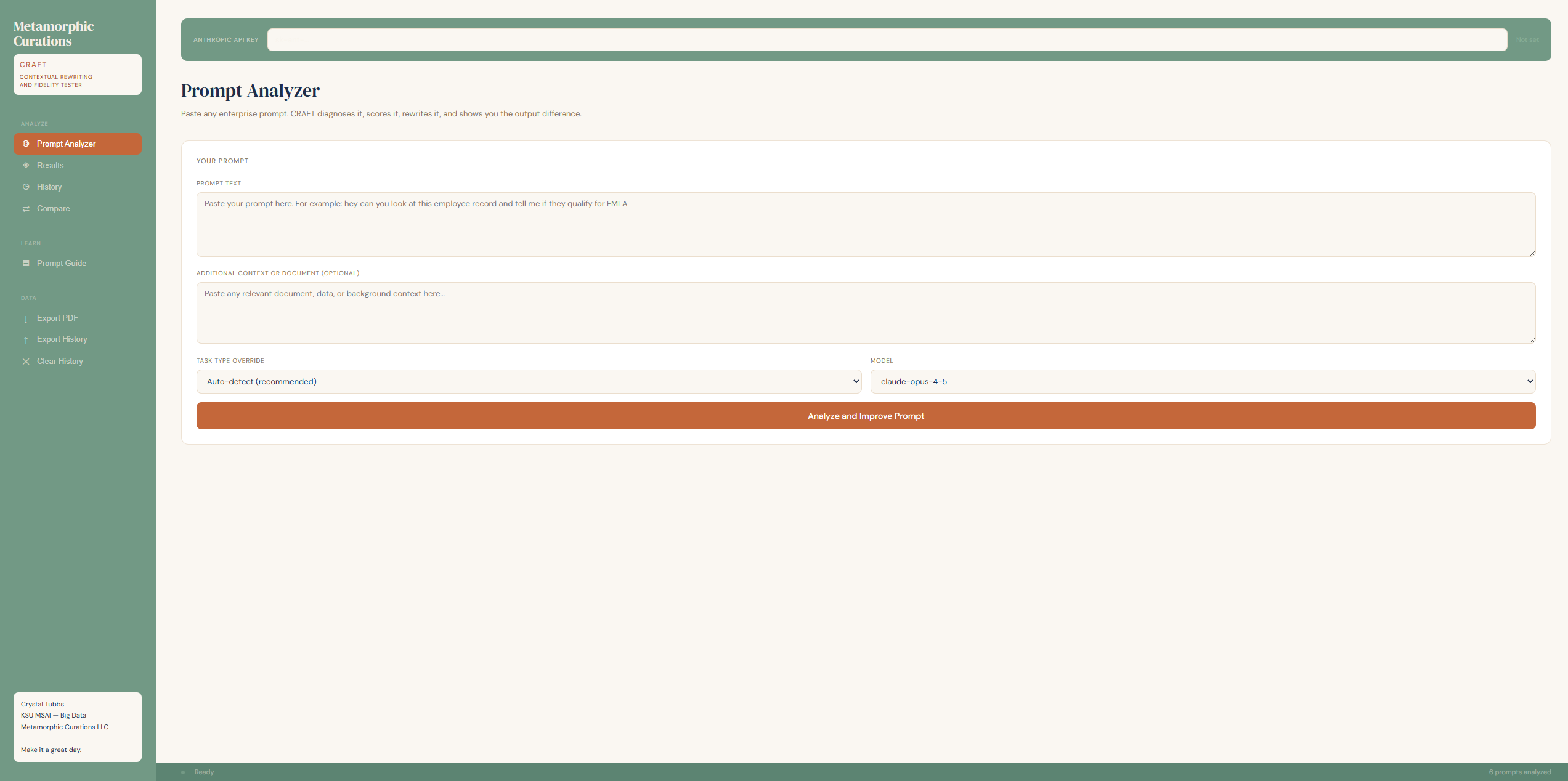

My coursework projects span research on covert bias transfer, surrogate accountability for agentic systems, and context-dependent bias in LLM resume screening alongside a product line that puts that research into builders' hands: the reliability pipeline featured here, CRAFT as its operational surface, and CHRYSALIS as its extension to agentic belief governance.

I build agentic systems and RAG pipelines and advise clients on AI transformation. The thread through all of it is the same: use technology to consciously build a more equitable world.

Governance isn't a cost center. It's a performance multiplier when it operates at the right layer.